|

Note: this article targets 64-bit machines. On a 32-bit machine you can simply unpack the binary release with tar -zxvf hadoop-2.2.0.tar.gz and configure it.

Step 1: Install the JDK (version 6 or later).

Step 2: Download Hadoop from http://mirror.esocc.com/apache/hadoop/common/hadoop-2.2.0/ and choose hadoop-2.2.0-src.tar.gz so that you can compile and install from source.

Why build from source instead of installing hadoop-2.2.0.tar.gz? Run the following command against the binary release to see:

    [root@cloud003 hduser]# file hadoop-2.2.0/lib/native/*
    hadoop-2.2.0/lib/native/libhadoop.a: current ar archive
    hadoop-2.2.0/lib/native/libhadooppipes.a: current ar archive
    hadoop-2.2.0/lib/native/libhadoop.so: symbolic link to `libhadoop.so.1.0.0'
    hadoop-2.2.0/lib/native/libhadoop.so.1.0.0: ELF 32-bit LSB shared object, Intel 80386, version 1 (SYSV), dynamically linked, not stripped
    hadoop-2.2.0/lib/native/libhadooputils.a: current ar archive
    hadoop-2.2.0/lib/native/libhdfs.a: current ar archive
    hadoop-2.2.0/lib/native/libhdfs.so: symbolic link to `libhdfs.so.0.0.0'
    hadoop-2.2.0/lib/native/libhdfs.so.0.0.0: ELF 32-bit LSB shared object, Intel 80386, version 1 (SYSV), dynamically linked, not stripped

As you can see, the native libraries in the official release are 32-bit, but our machine is 64-bit, so we have to compile and install from source.

Step 3: After compiling and installing, check again:

    [root@cloud003 hduser]# file /usr/local/hadoop-2.2.0/lib/native/*
    /usr/local/hadoop-2.2.0/lib/native/libhadoop.a: current ar archive
    /usr/local/hadoop-2.2.0/lib/native/libhadooppipes.a: current ar archive
    /usr/local/hadoop-2.2.0/lib/native/libhadoop.so: symbolic link to `libhadoop.so.1.0.0'
    /usr/local/hadoop-2.2.0/lib/native/libhadoop.so.1.0.0: ELF 64-bit LSB shared object, x86-64, version 1 (SYSV), dynamically linked, not stripped
    /usr/local/hadoop-2.2.0/lib/native/libhadooputils.a: current ar archive
    /usr/local/hadoop-2.2.0/lib/native/libhdfs.a: current ar archive
    /usr/local/hadoop-2.2.0/lib/native/libhdfs.so: symbolic link to `libhdfs.so.0.0.0'
    /usr/local/hadoop-2.2.0/lib/native/libhdfs.so.0.0.0: ELF 64-bit LSB shared object, x86-64, version 1 (SYSV), dynamically linked, not stripped
    [root@cloud003 hduser]#

The shared objects are now 64-bit.

Step 4: Add environment variables and change the configuration.

Add HADOOP_HOME:

    [root@cloud003 hduser]# vi ~/.bash_profile
    ## export JAVA_HOME JDK ##
    export JAVA_HOME="/usr/java/jdk1.6.0_45"
    ## export JAVA_HOME JRE ##
    ##export JAVA_HOME="/usr/java/jre1.6.0_45"
    export CLASSPATH=.:$JAVA_HOME/lib/tools.jar:$JAVA_HOME/lib/dt.jar
    export ANT_HOME=/usr/local/apache-ant-1.9.2
    export FINDBUGS_HOME=/usr/local/findbugs-2.0.2
    export HADOOP_HOME=/usr/local/hadoop-2.2.0
    PATH=$JAVA_HOME/bin:$ANT_HOME/bin:$FINDBUGS_HOME/bin:$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH
    export PATH

Make the new variables take effect:

    [root@cloud003 hduser]# source ~/.bash_profile

Add the directory structure:

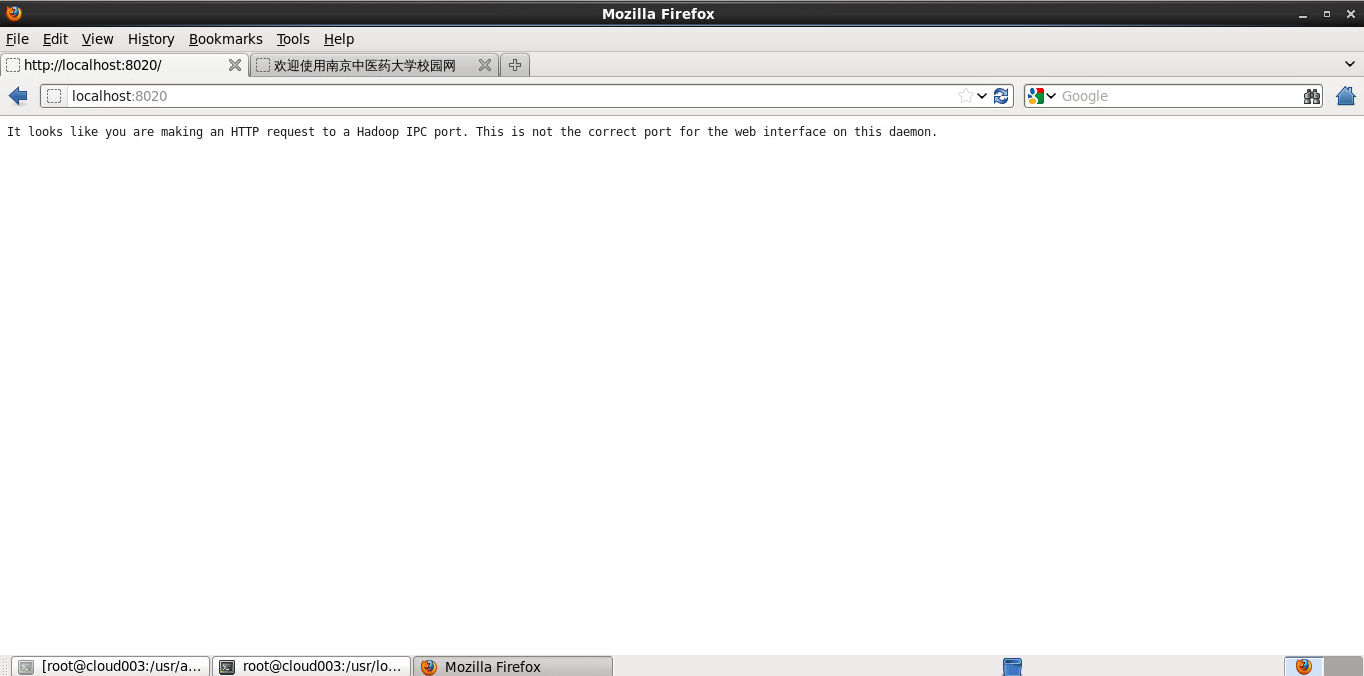

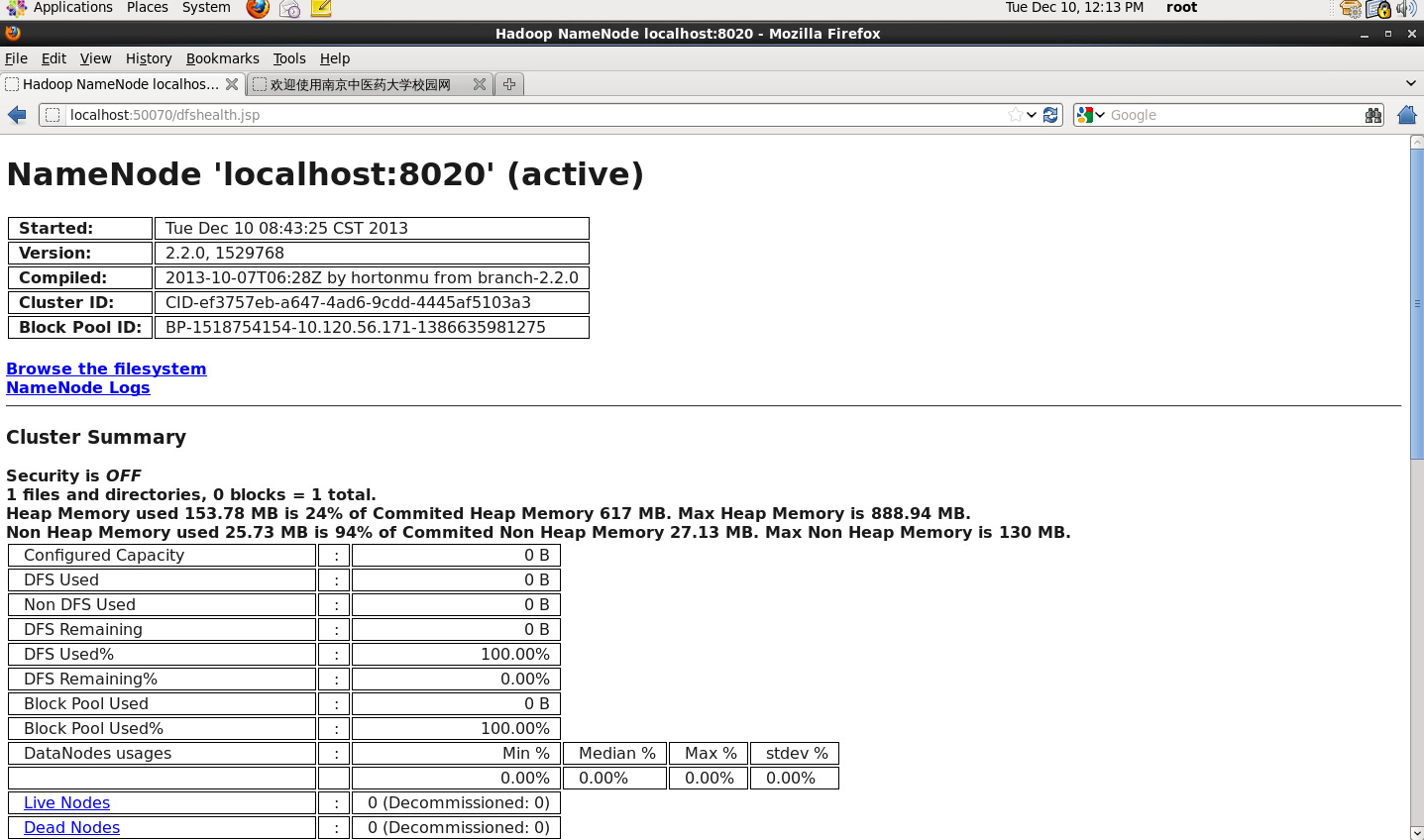

Modify the configuration files (see the official single-node setup guide: http://hadoop.apache.org/docs/r2.2.0/hadoop-project-dist/hadoop-common/SingleCluster.html):

    vi etc/hadoop/hdfs-site.xml
    vi etc/hadoop/core-site.xml
    cp etc/hadoop/mapred-site.xml.template etc/hadoop/mapred-site.xml
    vi etc/hadoop/mapred-site.xml
    vi etc/hadoop/yarn-site.xml

Point JAVA_HOME in hadoop-env.sh to the JDK path on your system:

    [root@cloud003 hadoop-2.2.0]# vi etc/hadoop/hadoop-env.sh
    # Copyright 2011 The Apache Software Foundation
    #
    # Licensed to the Apache Software Foundation (ASF) under one
    # or more contributor license agreements. See the NOTICE file
    # distributed with this work for additional information
    # regarding copyright ownership. The ASF licenses this file
    # to you under the Apache License, Version 2.0 (the
    # "License"); you may not use this file except in compliance
    # with the License. You may obtain a copy of the License at
    #
    # http://www.apache.org/licenses/LICENSE-2.0
    #
    # Unless required by applicable law or agreed to in writing, software
    # distributed under the License is distributed on an "AS IS" BASIS,
    # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
    # See the License for the specific language governing permissions and
    # limitations under the License.
    # Set Hadoop-specific environment variables here.
    # The only required environment variable is JAVA_HOME. All others are
    # optional. When running a distributed configuration it is best to
    # set JAVA_HOME in this file, so that it is correctly defined on
    # remote nodes.
    # The java implementation to use.
    export JAVA_HOME=/usr/java/jdk1.6.0_45 # change this to your JDK path
    # The jsvc implementation to use. Jsvc is required to run secure datanodes.
    #export JSVC_HOME=${JSVC_HOME}
    export HADOOP_CONF_DIR=${HADOOP_CONF_DIR:-"/etc/hadoop"}

After making the changes above, format the NameNode:

    [root@cloud003 hadoop-2.2.0]# hdfs namenode -format
    .....................
    .....................
    .....................
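The walkthrough above opens the four site files without showing what goes into them. As a rough sketch only, the script below writes one minimal property into each file. The property names (fs.defaultFS, dfs.replication, mapreduce.framework.name, yarn.nodemanager.aux-services) are standard Hadoop 2.x settings, but every value here, including the hdfs://localhost:9000 address, is an assumption and not taken from this article. The helper names write_site_file and write_single_node_conf are hypothetical. Settings like these need to be in place before the hdfs namenode -format step.

```shell
#!/bin/sh
# Sketch under stated assumptions: all values below are illustrative guesses
# for a single-node setup, not the configuration used in the article.

# write_site_file FILE NAME=VALUE...
# Writes a Hadoop-style XML configuration file with one <property> per pair.
# The function body runs in a subshell so its variables stay local.
write_site_file() (
  file="$1"
  shift
  {
    echo '<?xml version="1.0"?>'
    echo '<configuration>'
    for pair in "$@"; do
      # Text before the first '=' is the name, text after it is the value.
      printf '  <property>\n    <name>%s</name>\n    <value>%s</value>\n  </property>\n' \
        "${pair%%=*}" "${pair#*=}"
    done
    echo '</configuration>'
  } > "$file"
)

# write_single_node_conf DIR
# Creates DIR if needed and writes the four site files into it.
write_single_node_conf() (
  conf_dir="$1"
  mkdir -p "$conf_dir"
  write_site_file "$conf_dir/core-site.xml"   "fs.defaultFS=hdfs://localhost:9000"
  write_site_file "$conf_dir/hdfs-site.xml"   "dfs.replication=1"
  write_site_file "$conf_dir/mapred-site.xml" "mapreduce.framework.name=yarn"
  write_site_file "$conf_dir/yarn-site.xml"   "yarn.nodemanager.aux-services=mapreduce_shuffle"
)
```

Calling write_single_node_conf "$HADOOP_HOME/etc/hadoop" would overwrite the existing site files, so it is safer to generate them into a scratch directory first, compare, and copy them over by hand.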
Then start the daemons:

    [root@cloud003 hadoop-2.2.0]# hadoop-daemon.sh start namenode
    starting namenode, logging to /usr/local/hadoop-2.2.0/logs/hadoop-root-namenode-cloud003.out
    [root@cloud003 hadoop-2.2.0]# hadoop-daemon.sh start datanode
    starting datanode, logging to /usr/local/hadoop-2.2.0/logs/hadoop-root-datanode-cloud003.out
    [root@cloud003 hadoop-2.2.0]# yarn-daemon.sh start resourcemanager
    starting resourcemanager, logging to /usr/local/hadoop-2.2.0/logs/yarn-root-resourcemanager-cloud003.out
    [root@cloud003 hadoop-2.2.0]# yarn-daemon.sh start manager
    starting manager, logging to /usr/local/hadoop-2.2.0/logs/yarn-root-manager-cloud003.out
    [root@cloud003 hadoop-2.2.0]# start-yarn.sh
    starting yarn daemons
    resourcemanager running as process 870. Stop it first.
    The authenticity of host 'localhost (::1)' can't be established.
    RSA key fingerprint is 45:9f:56:fd:79:b8:be:18:86:3e:3f:a7:bf:90:2f:6c.
    Are you sure you want to continue connecting (yes/no)? y
    Please type 'yes' or 'no': yes
    localhost: Warning: Permanently added 'localhost' (RSA) to the list of known hosts.
    localhost: starting nodemanager, logging to /usr/local/hadoop-2.2.0/logs/yarn-root-nodemanager-cloud003.out
    [root@cloud003 hadoop-2.2.0]# jps
    1253 NodeManager
    870 ResourceManager
    1351 Jps
    31069 DataNode
    725 NameNode

Note: manager is not a YARN daemon name; the fourth command was presumably meant to be yarn-daemon.sh start nodemanager. In the session above the NodeManager only came up when start-yarn.sh was run afterwards, which is why it reports starting nodemanager while the ResourceManager was already running.

Test:
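The jps listing above can also be checked mechanically. The following is a minimal sketch, assuming the four daemons shown in that listing (NameNode, DataNode, ResourceManager, NodeManager) are the ones expected on this single node. The function name check_daemons is hypothetical and not part of Hadoop.

```shell
#!/bin/sh
# check_daemons: reads `jps` output on stdin, prints one "missing: NAME" line
# for each expected daemon that is absent, and succeeds only if none is missing.
# The function body runs in a subshell so its variables stay local.
check_daemons() (
  jps_output="$(cat)"
  missing=0
  for daemon in NameNode DataNode ResourceManager NodeManager; do
    # Compare the second column exactly, so that SecondaryNameNode
    # is not mistaken for NameNode.
    if ! printf '%s\n' "$jps_output" |
        awk -v d="$daemon" '$2 == d { found = 1 } END { exit !found }'; then
      echo "missing: $daemon"
      missing=1
    fi
  done
  [ "$missing" -eq 0 ]
)
```

With the daemons running, it would be invoked as jps | check_daemons; a zero exit status means all four names appeared in the listing.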

View the cluster's running status on the ResourceManager (the Hadoop resource management page)

View the node status:

In Firefox, open: http://localhost:50070 |